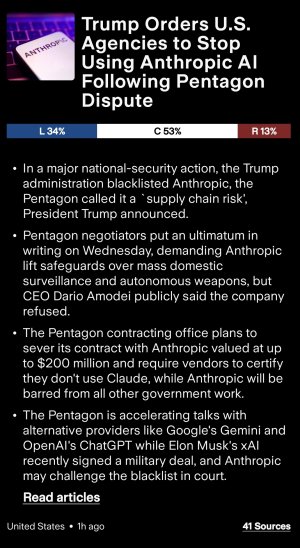

The Pentagon asked two major defense contractors on Wednesday to provide an assessment of their reliance on Anthropic's AI model, Claude — a first step toward a potential designation of Anthropic as a "supply chain risk," Axios has learned.

Why it matters: That penalty is usually reserved for companies from adversarial countries, such as Chinese tech giant Huawei.

- Using it to punish a leading American tech firm, particularly one on which the military itself is currently reliant, would be unprecedented.

- Boeing Defense, Space and Security, a division of Boeing, has no active contracts with Anthropic, a spokesperson said.

- A Boeing executive told Axios: "We sought their partnership [in the past] and ultimately could not come to an agreement. They were somewhat reluctant to work with the defense industry."

- A Lockheed spokesperson confirmed the company was contacted by the Defense Department regarding an analysis of its exposure and reliance on Anthropic ahead of "a potential supply chain risk declaration."

- The Pentagon plans to reach out to "all the traditional primes" — meaning the major contractors that supply things like fighter jets and weapons systems — about whether and how they use Claude, a source familiar told Axios.

- The Pentagon is impressed with Claude's performance, but furious that Anthropic has refused to lift its safeguards and let the military use it for "all lawful purposes."

- Anthropic insists, in particular, on blocking Claude's use for the mass surveillance of Americans or to develop weapons that fire without human involvement.

- The Pentagon insists it's unworkable to have to clear individual use cases with Anthropic.

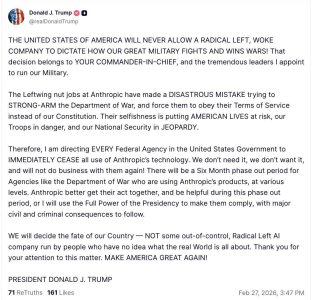

- After that, Hegseth warned, the administration would either use the Defense Production Act to compel Anthropic to tailor its model to the military's needs, or else declare the company a supply chain risk.

- While Anthropic could theoretically challenge it in court, invoking the DPA would let the military maintain access to Claude.

- Wednesday's outreach suggests the military is leaning toward a supply chain risk designation.

- The spokesperson did not comment on the potential supply chain risk designation.

- The Pentagon told Axios it was "preparing to execute on any decision that the secretary might make on Friday regarding Anthropic."

- Referring to the possible supply chain risk designation earlier this week, a senior Defense official told Axios: "It will be an enormous pain in the ass to disentangle, and we are going to make sure they pay a price for forcing our hand like this."

Reality check: Asking suppliers to analyze their own reliance on Claude and report back to the Pentagon is a lot different than immediately forcing them to cut ties. It's possible this is more brinksmanship on the Pentagon's side to try to convince Anthropic to fold.

- But Anthropic has been insistent up to now that it will not back down on surveillance or autonomous weapons, two areas Amodei has personally raised when discussing the dangers of AI.

- The supply chain risk designation could be a significant blow if a number of companies that work with the government remove Claude from their operations.

- However, Anthropic could see some benefit in being viewed by potential customers and staffers as the company that stood its ground amid concerns of an AI arms race.

- Google and OpenAI, whose models are already available in unclassified systems, are also in negotiations about moving into the classified space.

- One source familiar with those discussions described Claude as the most capable model in a number of military use cases, but called Google's Gemini a strong alternative.

- The Pentagon insists Google and OpenAI would have to lift their safeguards to get those contracts.